8-4 Meta AI: CodeLlama Model

Learning Objectives

Use a Python program to download the CodeLlama model from the Hugging Face platform, then give CodeLlama simple prompts and have it generate code in response.

What is CodeLlama?

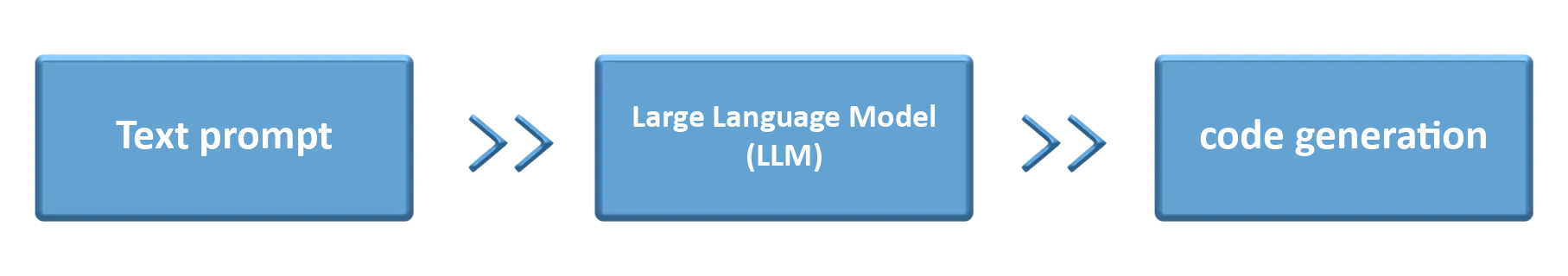

CodeLlama is a large language model developed by Meta AI specifically for code generation and code understanding. Given a description of what you need, or the beginning of a program, it generates the corresponding code snippet.

What Can CodeLlama Do?

1. Generate code examples: Automatically produce executable code based on descriptions.

2. Code explanation: Explain complex functions or algorithm logic in simple language.

3. Debugging suggestions: Help identify errors in your code and provide directions for fixes.

How to Get Started?

1. Go to meta-llama/CodeLlama-7b-hf (https://huggingface.co/meta-llama/CodeLlama-7b-hf), the model used in the program below, to request access and wait for approval. Once approved, the model page shows that you have been granted access.

2. The following example program asks CodeLlama to complete the body of a twoSum function.

(1) Replace the value of the token argument with the Hugging Face token you generated earlier.

    import torch
    import transformers
    from transformers import AutoTokenizer
    from huggingface_hub import login

    # Log in to Hugging Face
    login(token="hf_XXXXXXXXXXXXXXXX")  # Replace with your own Hugging Face token

    # Model name
    model = "meta-llama/CodeLlama-7b-hf"

    # Load tokenizer from the model path
    tokenizer = AutoTokenizer.from_pretrained(model)

    # Create a pipeline for text generation
    pipeline = transformers.pipeline(
        "text-generation",  # Specify task type as text generation
        model=model,  # Specify model ID
        torch_dtype=torch.float16,  # Specify model data type (float16)
        device_map="auto",  # Automatically select device (CPU or GPU)
        model_kwargs={"cache_dir": "./model"},  # Set path to store the model (default is ~/.cache/huggingface)
    )

    # Ask the model to continue the function definition given as the prompt
    sequences = pipeline(
        "def twoSum(nums, target):",
        do_sample=True,  # Enable random sampling
        top_k=10,  # Sample from top 10 highest-probability tokens
        temperature=0.1,  # Low value keeps sampling close to the most likely tokens
        top_p=0.95,  # Only consider tokens with cumulative probability up to 0.95
        num_return_sequences=1,  # Generate one sequence
        eos_token_id=tokenizer.eos_token_id,  # Specify end-of-sequence token
        max_length=200,  # Maximum total length in tokens (prompt plus generated text)
    )

    # Print each generated sequence (the text includes the original prompt)
    for seq in sequences:
        print(f"Result: {seq['generated_text']}")
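The login call in the program above hard-codes the token, which makes it easy to leak when the script is shared. A minimal sketch of one alternative, assuming the token has been exported beforehand in an environment variable named `HF_TOKEN`. The helper names `read_hf_token` and `login_from_env` are illustrative only and are not part of any library:

```python
import os


def read_hf_token(env=None, var_name="HF_TOKEN"):
    """Return the token stored in `var_name`, or raise if it is missing."""
    # `env` defaults to the real environment; a plain dict can be passed for testing.
    env = os.environ if env is None else env
    token = env.get(var_name, "").strip()
    if not token:
        raise RuntimeError(f"Set the {var_name} environment variable first.")
    return token


def login_from_env():
    """Log in to Hugging Face with the token read from the environment."""
    # Imported here so read_hf_token() can be used without huggingface_hub installed.
    from huggingface_hub import login

    login(token=read_hf_token())
```

With this in place, the hard-coded `login(token="hf_...")` line could be swapped for a call to `login_from_env()`.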

3. After running the program, the console prints a line beginning with "Result:" followed by the prompt and CodeLlama's completion of the twoSum function.
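Because the program uses a base model with a `max_length` limit, the printed text may continue past the end of the function until the token budget runs out. A rough sketch of one way to keep only the first function, assuming the completion is ordinary indented Python. The helper `first_function` is hypothetical, and the `sample` string is hand-written for illustration, not real model output:

```python
def first_function(generated_text):
    """Return only the first top-level function found in the generated text."""
    kept = []
    inside = False
    for line in generated_text.splitlines():
        if not inside:
            # Skip everything until the first function definition.
            if line.startswith("def "):
                inside = True
                kept.append(line)
            continue
        # A non-empty line with no indentation ends the function body.
        if line.strip() and not line[0].isspace():
            break
        kept.append(line)
    return "\n".join(kept).rstrip()


# Hand-written stand-in for seq["generated_text"], used only to exercise the helper.
sample = (
    "def twoSum(nums, target):\n"
    "    seen = {}\n"
    "    for i, n in enumerate(nums):\n"
    "        if target - n in seen:\n"
    "            return [seen[target - n], i]\n"
    "        seen[n] = i\n"
    "\n"
    "print(twoSum([2, 7, 11, 15], 9))\n"
)
print(first_function(sample))
```

In the program itself, the helper would be applied to `seq['generated_text']` before printing.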

Once completed, you can modify the input prompts in the program and use CodeLlama to help solve coding problems like Palindrome Number, Roman to Integer, and so on.
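One way to prepare prompts for other problems is to give the model a function signature plus a short docstring describing the task, in the same style as the twoSum prompt. The sketch below is only an illustration: `build_prompt`, the function names `isPalindrome` and `romanToInt`, and the docstring wording are all assumptions chosen for this example.

```python
def build_prompt(signature, description):
    """Build a completion prompt: a def line followed by a one-line docstring."""
    header = signature.strip()
    if not header.endswith(":"):
        header += ":"
    return f'{header}\n    """{description.strip()}"""\n'


# Illustrative prompts; the names and descriptions are assumptions, not fixed requirements.
prompts = {
    "Palindrome Number": build_prompt(
        "def isPalindrome(x)",
        "Return True if the integer x reads the same forwards and backwards.",
    ),
    "Roman to Integer": build_prompt(
        "def romanToInt(s)",
        "Convert the Roman numeral string s to an integer.",
    ),
}

for name, prompt in prompts.items():
    print(f"--- {name} ---")
    print(prompt)
```

Each prompt string can then be passed as the first argument of the pipeline call in place of `"def twoSum(nums, target):"`.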

Reference:

meta-llama/CodeLlama-7b-hf · Hugging Face