7-12 VLM Development

Learning Objectives

A VLM (Vision-Language Model) is an AI model that can understand both images and natural language. By combining visual and linguistic information, it can perform multimodal tasks such as image captioning and visual question answering.

Quantizing the LLM (Large Language Model) inside a VLM is a key step in making the VLM run efficiently. However, some quantization tools cannot be installed directly on Jetson platforms. To address this, NVIDIA provides Jetson containers: container environments matched to specific Jetson hardware and JetPack versions, which simplify deployment and speed up development.

Initial environment setup

// If you encounter any Docker-related errors, please refer to the tutorial in Chapter 5

git clone https://github.com/dusty-nv/jetson-containers

bash jetson-containers/install.sh
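After install.sh finishes, the `jetson-containers` and `autotag` commands used in the next step should be on your PATH. A quick check before moving on can catch a failed install early. This is a minimal sketch: `require_commands` is a hypothetical helper, and also checking for `docker` is an assumption based on the containers running under Docker.

```bash
#!/usr/bin/env bash
# Hypothetical helper: report which of the given commands are missing from
# PATH. Returns 0 if all are found, 1 if any are missing.
require_commands() {
  local missing=0 cmd
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "missing: $cmd" >&2
      missing=1
    fi
  done
  return "$missing"
}

# After running install.sh (assumes it placed these tools on PATH):
# require_commands jetson-containers autotag docker && echo "ready"
```

If anything is reported missing, open a new terminal so PATH changes take effect, or rerun the installer.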

Download and launch the image

sudo jetson-containers run $(autotag nano_llm)
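`autotag nano_llm` looks for a nano_llm image built for the L4T (Linux for Tegra) release installed on the device; each JetPack version ships a specific L4T release. To see which release you are on, read the first line of `/etc/nv_tegra_release`. The sketch below assumes that line has the usual form `# R36 (release), REVISION: 3.0, ...`; the function name `parse_l4t_version` is hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: turn the first line of /etc/nv_tegra_release
# (assumed format: "# R<major> (release), REVISION: <minor>.<patch>, ...")
# into a dotted L4T version such as 36.3.0.
parse_l4t_version() {
  local re='^# R([0-9]+) \(release\), REVISION: ([0-9]+\.[0-9]+)'
  if [[ "$1" =~ $re ]]; then
    echo "${BASH_REMATCH[1]}.${BASH_REMATCH[2]}"
  else
    echo "unrecognized nv_tegra_release line: $1" >&2
    return 1
  fi
}

# On a Jetson device:
# parse_l4t_version "$(head -n 1 /etc/nv_tegra_release)"
```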

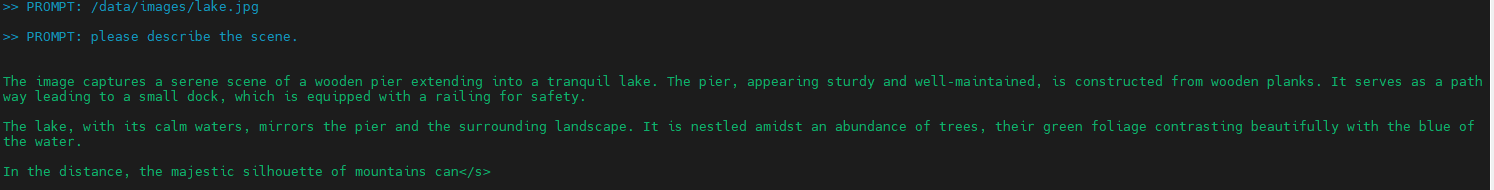

Run VLM

// The results will be displayed directly in the terminal

python3 -m nano_llm.chat --api=mlc \
  --model Efficient-Large-Model/VILA1.5-3b \
  --prompt '/data/images/lake.jpg' \
  --prompt 'please describe the scene.'
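When trying several images, the chat command can be wrapped in a small bash function so that only the image name and questions change. This is a minimal sketch run inside the container, not part of NanoLLM: the name `ask_vlm` and the `DRY_RUN` variable are hypothetical, and passing more than one question assumes nano_llm.chat handles repeated `--prompt` flags in order, as it does for the image and question in the tutorial command.

```bash
#!/usr/bin/env bash
# Hypothetical wrapper around the tutorial's nano_llm.chat command.
# Run inside the nano_llm container; images are read from /data/images.
# Usage: ask_vlm IMAGE_FILENAME [QUESTION ...]
ask_vlm() {
  local image="$1"
  shift
  # Build the command as an array so each argument stays intact
  # (no trailing backslashes; spaces inside questions are preserved).
  local -a cmd=(python3 -m nano_llm.chat --api=mlc
                --model Efficient-Large-Model/VILA1.5-3b
                --prompt "/data/images/$image")
  # Default to the tutorial's question when none is given.
  if [ "$#" -eq 0 ]; then
    set -- 'please describe the scene.'
  fi
  local question
  for question in "$@"; do
    cmd+=(--prompt "$question")
  done
  # DRY_RUN=1 (hypothetical) prints the command instead of running it.
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "${cmd[*]}"
  else
    "${cmd[@]}"
  fi
}
```

For example, `ask_vlm lake.jpg` reproduces the tutorial command, and `DRY_RUN=1 ask_vlm lake.jpg 'what season is it?'` only prints what would run.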

Place the images you want described in the jetson-containers/data/images folder on the host; inside the container, the same folder is available as /data/images. Update the image --prompt argument to match your image's filename, for example --prompt '/data/images/lake.jpg'.
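Copying an image into that folder and working out the matching `--prompt` path can be scripted on the host. A minimal sketch, assuming jetson-containers was cloned into the current directory (so the shared folder is jetson-containers/data) and that this folder appears as /data inside the container; `stage_image` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Hypothetical host-side helper: copy an image into the shared data folder
# and print the path to pass to --prompt inside the container.
# Usage: stage_image IMAGE_PATH [DATA_DIR]
stage_image() {
  local src="$1"
  # Host side of the /data mount; assumes the repo was cloned into $PWD.
  local data_dir="${2:-jetson-containers/data}"
  if [ ! -f "$src" ]; then
    echo "no such file: $src" >&2
    return 1
  fi
  mkdir -p "$data_dir/images" || return 1
  cp "$src" "$data_dir/images/" || return 1
  echo "/data/images/$(basename "$src")"
}

# Example (if ~/Pictures/dog.jpg exists, prints /data/images/dog.jpg):
# stage_image ~/Pictures/dog.jpg
```

The printed path is what goes in the image `--prompt` of the nano_llm.chat command.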